The OpenAI API ecosystem continues to evolve at a rapid pace. While many developers have become comfortable with the Chat Completions API, there’s a new player in town that offers more flexibility and powerful capabilities. The Responses API represents the next generation of OpenAI’s interface, combining the ability to handle both text and image inputs, manage ongoing conversations, and integrate with a variety of built-in and external tools. In this article, we’ll explore how this API works, what makes it different from its predecessors, and how you can leverage its features—from web search integration to reasoning models—to build more sophisticated AI applications.

This article is based on the lesson from the e-learning course Machine Learning, Data Science, and AI Engineering with Python, which provides comprehensive insights into using the OpenAI Responses API.

Meet the Responses API

Initially, there was the Completions API, which was then replaced by the Chat Completions API. Today, we have the Responses API, which is the latest and most advanced way to utilize the OpenAI API.

Flexible Inputs, Outputs, and Ongoing Conversations

The Responses API is simpler and more flexible than its predecessors. It accepts both text and image inputs, and it can return plain text or structured JSON. Image output is also possible, but through a tool rather than directly from the model. Built-in conversation state management makes ongoing dialogues easier to maintain: each response can reference a previous one, so context carries over without resending the whole history. The API also supports streaming, which delivers the response incrementally as it is generated for a more interactive experience.
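As a rough sketch of the streaming behavior described above: with the Python SDK, passing stream=True to client.responses.create yields a sequence of events, and text arrives in events whose type is response.output_text.delta. The helper name collect_text and the simulated events below are illustrative assumptions, used so the logic can run without an API key.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_text(events: Iterable) -> str:
    """Print text deltas as they arrive and return the assembled text."""
    pieces = []
    for event in events:
        # Lifecycle events (response.created, response.completed, ...) are skipped.
        if getattr(event, "type", None) == "response.output_text.delta":
            print(event.delta, end="", flush=True)
            pieces.append(event.delta)
    print()
    return "".join(pieces)


# Simulated events standing in for a live stream (hypothetical data).
fake_stream = [
    SimpleNamespace(type="response.created"),
    SimpleNamespace(type="response.output_text.delta", delta="Ahoy, "),
    SimpleNamespace(type="response.output_text.delta", delta="matey!"),
    SimpleNamespace(type="response.completed"),
]
streamed_text = collect_text(fake_stream)

# With a real client, the call would look roughly like this:
# stream = client.responses.create(model="gpt-4o", input="Say hello.", stream=True)
# collect_text(stream)
```

Printing each delta as it arrives is what gives the user the feeling of a live response; the joined string is kept so the full text is still available afterwards.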

Integrating Powerful Tools

The Responses API supports a variety of tools. As with the Chat Completions API, you can define your own functions and expose them to the model as custom tools. The Responses API also offers several built-in tools. A web search tool retrieves real-time information from the web, beyond the model’s training data. A file search tool searches files you provide, offering a straightforward way to implement retrieval-augmented generation (RAG). There is also an image generation tool, although using it requires organization verification through an identity check. With it, you can use the latest GPT image model within the Responses API without needing the separate Image API.
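To make the custom-function idea concrete, here is a minimal sketch of the round trip. The tool definition uses the flat format the Responses API expects for function tools, and the model's request to call it comes back as an output item of type function_call. The function get_order_status, the tool schema, and the simulated model output are all hypothetical and exist only for illustration.

```python
import json

# A custom function tool definition (hypothetical example function).
order_status_tool = {
    "type": "function",
    "name": "get_order_status",
    "description": "Look up the shipping status of an order.",
    "parameters": {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
        "additionalProperties": False,
    },
}


def get_order_status(order_id: str) -> str:
    """Stand-in implementation; a real one would query a database."""
    return f"Order {order_id} has shipped."


LOCAL_FUNCTIONS = {"get_order_status": get_order_status}


def run_function_calls(output_items: list[dict]) -> list[dict]:
    """Execute each function_call item and build the matching result items."""
    results = []
    for item in output_items:
        if item.get("type") != "function_call":
            continue
        func = LOCAL_FUNCTIONS[item["name"]]
        arguments = json.loads(item["arguments"])  # arguments arrive as a JSON string
        results.append({
            "type": "function_call_output",
            "call_id": item["call_id"],
            "output": func(**arguments),
        })
    return results


# Simulated model output (hypothetical data) in place of a live response.
fake_output = [{
    "type": "function_call",
    "name": "get_order_status",
    "call_id": "call_123",
    "arguments": '{"order_id": "A-42"}',
}]
tool_results = run_function_calls(fake_output)
print(tool_results)
```

In a live exchange you would pass tools=[order_status_tool] on the first request, then send the result items as the input of a follow-up request so the model can phrase the final answer.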

Connecting with External Systems via MCP

The Responses API can connect to remote Model Context Protocol (MCP) servers. MCP lets organizations expose their data and capabilities to AI systems in a standard way. For example, you can interface with GitHub to retrieve information about a repository using GitHub’s MCP server. Similarly, a Shopify MCP server might enable you to add items to a shopping cart using plain English commands, such as “Add this item with this item ID to this cart ID.” MCP tells the model which functions these external systems offer and how to call them, making it a powerful new capability.

Code Execution and Computer Control

The Responses API includes a code interpreter tool that lets the model write and execute code as part of producing a response. It also offers computer control capabilities, although this feature is still in its early stages and requires significant approvals due to its potential for misuse. With it, the model can control a browser or other desktop applications by analyzing screenshots to determine which actions to take. While this opens up vast possibilities for automation, it also presents risks, so it needs careful implementation.
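A request that enables the code interpreter might be assembled as in the sketch below. The tool entry with an automatically managed container follows the shape used in OpenAI's documentation; the helper name build_code_interpreter_request, the instructions text, and the choice of gpt-4o are assumptions made for illustration.

```python
def build_code_interpreter_request(model: str, question: str) -> dict:
    """Assemble keyword arguments for a request that may run code."""
    return {
        "model": model,
        # "auto" asks the API to create and manage the sandbox container.
        "tools": [{"type": "code_interpreter", "container": {"type": "auto"}}],
        "instructions": "Write and run Python code to answer the question.",
        "input": question,
    }


request = build_code_interpreter_request(
    "gpt-4o",
    "What is the standard deviation of 4, 8, 15, 16, 23, 42?",
)
print(request)

# With a real client, the request would be sent like this:
# response = client.responses.create(**request)
# print(response.output_text)
```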

The Power of Reasoning Models

The Responses API supports reasoning models, such as o3 and o1, and lets you specify the level of reasoning effort (low, medium, or high) in your requests. These models are trained with reinforcement learning to break down complex tasks into a “chain of thought,” turning high-level goals into actionable subtasks. Under the hood they are still language models, but they have been further trained on examples of problem decomposition.

Reasoning models are particularly useful for coding complex systems, refactoring, and planning large code bases. They excel in STEM research, such as identifying new compounds for antibiotics in biotech. Essentially, these models act like a senior-level employee, capable of handling complex tasks, whereas non-reasoning models are more akin to junior-level employees.

Cost Considerations for Reasoning

Reasoning models in the Responses API can consume tokens quickly. As they break a task down into a chain of thought, they generate reasoning tokens, which are not visible to users but still count toward the output tokens you are billed for. To manage costs, you can set the max_output_tokens parameter, which caps the total number of tokens generated, reasoning tokens included. However, reaching this limit may result in an incomplete response, so it’s important to experiment with this setting to balance cost control and response completeness.
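One way to keep an eye on this trade-off is to inspect the usage and status fields of each response. The sketch below works on the dictionary returned by response.to_dict(); the helper name summarize_usage and the numbers in the sample response are made up for illustration.

```python
def summarize_usage(response: dict) -> dict:
    """Report token usage and whether the output token cap cut the response short."""
    usage = response.get("usage") or {}
    details = usage.get("output_tokens_details") or {}
    incomplete = response.get("incomplete_details") or {}
    return {
        "status": response.get("status"),
        "output_tokens": usage.get("output_tokens", 0),
        "reasoning_tokens": details.get("reasoning_tokens", 0),
        "hit_token_limit": (
            response.get("status") == "incomplete"
            and incomplete.get("reason") == "max_output_tokens"
        ),
    }


# Hypothetical response in which reasoning used up most of the token budget.
fake_response = {
    "status": "incomplete",
    "incomplete_details": {"reason": "max_output_tokens"},
    "usage": {
        "input_tokens": 120,
        "output_tokens": 2000,
        "output_tokens_details": {"reasoning_tokens": 1920},
    },
}
report = summarize_usage(fake_response)
print(report)
```

If hit_token_limit comes back true and most of the output tokens were reasoning tokens, the cap is probably too low for the task at that effort level.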

Additionally, obtaining a summary of reasoning tokens requires organization verification. This feature is not demonstrated in the hands-on example due to these requirements.

Hands-on Examples with the Responses API

Let’s explore some hands-on examples to see how the Responses API operates in various scenarios. We’ll walk through code snippets that demonstrate its capabilities. To begin, we create an OpenAI client, ensuring that the API key is stored in an environment variable for seamless access.

import os, base64, json
from openai import OpenAI

# The client reads the API key from the OPENAI_API_KEY environment variable.
client = OpenAI()

To simplify future updates as new models are introduced, we define the models centrally. Currently, we’re using GPT-4o and the o4-mini reasoning model to save on costs. As new models become available, you can update these definitions accordingly.

model_name = "gpt-4o"
reasoning_model_name = "o4-mini"

Let’s begin with a straightforward example of using the Responses API to get a text response from a simple prompt. To create a response request, we use client.responses.create, specifying the model we want, such as GPT-4o. The input is the prompt itself: “Who is this Frank Kane guy on Udemy anyhow?”

We can also include additional parameters, such as instructions, which act as a system prompt. For instance, we might instruct it to “always talk like a pirate.” When we receive the full response, it may include several parameters. We’ll format this response neatly and print out the actual output text.

The output can be a bit complex, as it may contain multiple outputs. Each output element can have several content elements. To simplify, we’ll extract the first output element and its first content element to display the text.

# Simple text prompt and response...
response = client.responses.create(
    model=model_name,
    input="Who is this Frank Kane guy on Udemy anyhow?",
    instructions="Always talk like a pirate."
)

print("\nFull response:")
parsed = response.to_dict()
print(json.dumps(parsed, indent=2))

print("\nText only:")
print(response.output[0].content[0].text)
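Indexing the first output element works for a simple prompt, but the first element is not always a message: reasoning models and tool calls can put other items ahead of it. A slightly more defensive sketch walks every output item and collects the text parts. It operates on the dictionary from response.to_dict(); the helper name extract_text and the sample data are illustrative assumptions.

```python
def extract_text(response: dict) -> str:
    """Concatenate every output_text part found in message items."""
    chunks = []
    for item in response.get("output", []):
        if item.get("type") != "message":
            continue  # skip reasoning items, tool calls, and so on
        for part in item.get("content", []):
            if part.get("type") == "output_text":
                chunks.append(part["text"])
    return "".join(chunks)


# Hypothetical response where a reasoning item precedes the message.
fake_response = {
    "output": [
        {"type": "reasoning", "summary": []},
        {
            "type": "message",
            "role": "assistant",
            "content": [{"type": "output_text", "text": "Arr, he be an instructor!"}],
        },
    ]
}
text = extract_text(fake_response)
print(text)
```

The SDK's response.output_text property, used in the later examples, offers a similar convenience on the response object itself.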

Analyzing Images with the API

In this section, we’ll explore how to use an image input with the Responses API. This process involves two main steps. First, you need to provide the image to the API. You can do this by uploading the image file using the Files API. For example, if you have an image file named bird.png, you would upload it and receive a file ID in return. This ID is crucial as it allows the API to reference the image.

The purpose of the file must be specified as “vision,” indicating that it’s an image for analysis. In our example, bird.png is a picture of a bird taken in a backyard, and we want the API to identify the bird.

Next, you create a response request using client.responses.create. You pass in the model and the input, structured as a list of messages. The user message contains both text and image content. The text asks, “What kind of bird is this?” and the image is referenced by the file ID obtained earlier. This combination of text and image input will yield a text output, which should identify the bird. Let’s see if the API gets it right.

# Image input, text output
# Upload the image first; the returned file ID is referenced in the request.
with open("bird.png", "rb") as image_file:
    file_response = client.files.create(file=image_file, purpose="vision")

response = client.responses.create(
    model=model_name,
    input=[{
        "role": "user",
        "content": [
            {"type": "input_text", "text": "What kind of bird is this?"},
            {
                "type": "input_image",
                "file_id": file_response.id,
            },
        ],
    }],
)

print("\nImage query:")
print(response.output_text)
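Uploading through the Files API is one way to supply the image. As an alternative sketch, an input_image part can also carry the image inline as a base64 data URL in its image_url field, which avoids the separate upload for small files. The helper name build_image_message and the stand-in bytes below are assumptions for illustration; in practice you would read the bytes from bird.png.

```python
import base64


def build_image_message(image_bytes: bytes, question: str,
                        mime_type: str = "image/png") -> dict:
    """Build a user message that embeds the image as a base64 data URL."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "input_text", "text": question},
            {"type": "input_image", "image_url": f"data:{mime_type};base64,{encoded}"},
        ],
    }


# The eight-byte PNG signature stands in for real image data here.
sample_bytes = b"\x89PNG\r\n\x1a\n"
message = build_image_message(sample_bytes, "What kind of bird is this?")
print(message["content"][1]["image_url"])

# With a real client and a real image file:
# with open("bird.png", "rb") as f:
#     message = build_image_message(f.read(), "What kind of bird is this?")
# response = client.responses.create(model="gpt-4o", input=[message])
```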

Real-time Information with Web Search

Let’s explore how to use built-in tools with the Responses API, specifically the web search tool. This tool allows you to obtain real-time information, such as the current stock price of a company like Udemy. To use this feature, you need to specify an array of tools in your request. This array can include multiple tools, and the API will determine the most suitable one for your query. In this example, we use the web search preview tool, which accesses the internet to provide up-to-date answers, rather than relying solely on pre-existing training data. This capability allows you to receive current information directly from the web.

# Using a built-in tool
response = client.responses.create(
    model=model_name,
    tools=[{"type": "web_search_preview"}],
    input="What is the current stock price of UDMY?"
)

print("\nUsing the built-in web search tool:")
print(response.output_text)
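When the web search tool is used, the text parts of the response typically carry annotations of type url_citation that point to the pages the answer drew on. The sketch below pulls those out of the dictionary from response.to_dict() so they can be shown to the user. The helper name extract_citations and the sample response, including its URL, are hypothetical.

```python
def extract_citations(response: dict) -> list[tuple[str, str]]:
    """Return (title, url) pairs for every URL citation in the response."""
    citations = []
    for item in response.get("output", []):
        # Tool call items have no content, so fall back to an empty list.
        for part in item.get("content") or []:
            for note in part.get("annotations") or []:
                if note.get("type") == "url_citation":
                    citations.append((note.get("title", ""), note["url"]))
    return citations


# Hypothetical response: a search call item followed by an annotated message.
fake_response = {
    "output": [
        {"type": "web_search_call", "status": "completed"},
        {
            "type": "message",
            "content": [{
                "type": "output_text",
                "text": "UDMY is trading at ...",
                "annotations": [{
                    "type": "url_citation",
                    "title": "Example Finance",
                    "url": "https://example.com/quote/UDMY",
                    "start_index": 0,
                    "end_index": 22,
                }],
            }],
        },
    ]
}
sources = extract_citations(fake_response)
print(sources)
```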

Building Conversational Flows

In this example, we demonstrate how to maintain conversation state using the Responses API. We start by asking, “What is 5 plus 4?” and use the response ID from this query to inform a subsequent request. By passing the previous response ID into the next query, we can chain responses together. For instance, after receiving “9” as the answer to the first question, we can ask, “Add three more to that.” The API will then provide “12” as the answer, demonstrating its ability to maintain context across multiple interactions. This feature allows for seamless conversational flows where each response builds on the previous ones.

# Conversation state (chaining responses together)
print("\nConversation state demo:")

response = client.responses.create(
    model=model_name,
    input="What is 5 + 4?",
)
print(response.output_text)

second_response = client.responses.create(
    model=model_name,
    previous_response_id=response.id,
    input="Add 3 more to that.",
)
print(second_response.output_text)
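The same chaining pattern extends to any number of turns. The sketch below wraps it in a loop that threads each response ID into the next request. The helper name run_conversation is an assumption, and the request function is passed in as a parameter so the example can run against a stand-in instead of the live API.

```python
from types import SimpleNamespace
from typing import Callable, Optional


def run_conversation(create: Callable, model: str, prompts: list[str]) -> list[str]:
    """Send prompts in order, linking each request to the previous response."""
    answers = []
    previous_id: Optional[str] = None
    for prompt in prompts:
        kwargs = {"model": model, "input": prompt}
        if previous_id is not None:
            kwargs["previous_response_id"] = previous_id
        response = create(**kwargs)
        previous_id = response.id
        answers.append(response.output_text)
    return answers


# Stand-in for client.responses.create that records what it was asked.
calls = []


def fake_create(**kwargs):
    calls.append(kwargs)
    return SimpleNamespace(id=f"resp_{len(calls)}", output_text=f"answer {len(calls)}")


answers = run_conversation(fake_create, "gpt-4o", ["What is 5 + 4?", "Add 3 more to that."])
print(answers)

# With a real client:
# run_conversation(client.responses.create, "gpt-4o", ["What is 5 + 4?", "Add 3 more to that."])
```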

Integrating External Data with MCP

The Model Context Protocol (MCP) is another powerful tool you can use with the OpenAI API. MCP allows external servers to define and expose their capabilities, which the API can then utilize. This makes integration straightforward. You simply specify the model and the MCP tool, providing a name and a URL for the server.

For instance, using GitMCP, a service that exposes GitHub repositories as MCP servers, you can directly query information about the PyTorch project. By passing in a URL like gitmcp.io/username/repository, the MCP tool communicates with the server to understand its capabilities. If you ask, “How do I compile PyTorch with CUDA support?”, the tool will search the repository and return relevant information based on the current GitHub data.

While MCP simplifies accessing external data, it’s important to manage security and costs, as tool calls can become complex and expensive. You might need to specify which tools within an MCP server are permissible to use. For now, we’ll demonstrate its functionality by printing the full response to confirm the tool’s interaction and then display the final output text suitable for an end user.

# MCP demo
resp = client.responses.create(
    model=model_name,
    tools=[
        {
            "type": "mcp",
            "server_label": "gitmcp",
            "server_url": "https://gitmcp.io/pytorch/pytorch",
            "require_approval": "never",
        },
    ],
    input="How do I compile PyTorch with CUDA support?",
)

print("\nMCP (model context protocol) usage:")

print("\nFull response:")
parsed = resp.to_dict()
print(json.dumps(parsed, indent=2))

print("\nOutput only:")
print(resp.output_text)
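To restrict which of a server's tools the model may call, the MCP tool entry accepts an allowed_tools list. The sketch below builds such an entry. The helper name build_mcp_tool is an assumption, and the tool name search_pytorch_documentation is a hypothetical placeholder; the real names are whatever the server reports when its tools are listed.

```python
from typing import Optional, Sequence


def build_mcp_tool(server_label: str, server_url: str,
                   allowed_tools: Optional[Sequence[str]] = None,
                   require_approval: str = "never") -> dict:
    """Build an MCP tool entry, optionally limited to specific server tools."""
    tool = {
        "type": "mcp",
        "server_label": server_label,
        "server_url": server_url,
        "require_approval": require_approval,
    }
    if allowed_tools:
        # Only these tools are exposed to the model.
        tool["allowed_tools"] = list(allowed_tools)
    return tool


restricted_tool = build_mcp_tool(
    "gitmcp",
    "https://gitmcp.io/pytorch/pytorch",
    allowed_tools=["search_pytorch_documentation"],  # hypothetical tool name
)
print(restricted_tool)

# With a real client:
# resp = client.responses.create(model="gpt-4o", tools=[restricted_tool],
#                                input="How do I compile PyTorch with CUDA support?")
```

Limiting the tool list reduces both the risk surface and the number of tool definitions loaded into the model's context on each request.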

Solving Complex Problems with Reasoning

Let’s explore a reasoning demo using the Responses API. We’ll use a scenario involving retirement planning to demonstrate the capabilities of the reasoning model, specifically the o4-mini model. In this example, we consider a hypothetical 50-year-old with a million dollars in savings, seeking advice on when he can retire safely. While this model provides insights, it’s important to note that it should not be used for actual financial planning, as results can vary with each run.

The reasoning model attempts to break down complex tasks into subtasks, forming a “chain of thought” to arrive at a solution. You can specify the level of reasoning effort (low, medium, or high) depending on your needs. In this case, we’ll use a medium effort level. Additionally, if your organization has been verified, you can request a summary of the reasoning process.

We’ll input the problem specifics and examine the full response to see the reasoning tokens involved. The output contains the final answer, plus a summary of the reasoning process if one was requested. Let’s see how the model tackles this problem.

# Reasoning demo
prompt = """
Create a retirement plan for a 50-year-old male of average health in the United States.
Assume he has the maximum social security benefits, and that the expected reduction in benefits in 2033 occurs.
His current liquid assets saved are $1 million. How much more must he save each year in order to retire at
age 60, or age 65? His retirement goal is to survive until death on $100K per year, adjusted for inflation.
"""

response = client.responses.create(
    model=reasoning_model_name,
    # For a reasoning summary, you would add "summary": "auto",
    # but this requires organization verification.
    reasoning={"effort": "medium"},
    input=[
        {
            "role": "user",
            "content": prompt
        }
    ]
)

print("\nReasoning demo:")

print("\nFull response:")
parsed = response.to_dict()
print(json.dumps(parsed, indent=2))

print("\nOutput text only:")
print(response.output_text)
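For accounts that can request reasoning summaries, the summaries come back inside output items of type reasoning, separate from the final message. The sketch below collects them from the dictionary returned by response.to_dict(). The helper name extract_reasoning_summaries and the sample response are illustrative assumptions, not output from a real run.

```python
def extract_reasoning_summaries(response: dict) -> list[str]:
    """Collect the summary texts attached to reasoning items."""
    summaries = []
    for item in response.get("output", []):
        if item.get("type") != "reasoning":
            continue
        for part in item.get("summary") or []:
            if part.get("type") == "summary_text":
                summaries.append(part["text"])
    return summaries


# Hypothetical response, as if the request had used
# reasoning={"effort": "medium", "summary": "auto"}.
fake_response = {
    "output": [
        {
            "type": "reasoning",
            "summary": [
                {"type": "summary_text", "text": "Estimated yearly spending needs."},
                {"type": "summary_text", "text": "Compared retiring at 60 and at 65."},
            ],
        },
        {
            "type": "message",
            "content": [{"type": "output_text", "text": "Here is the plan..."}],
        },
    ]
}
summaries = extract_reasoning_summaries(fake_response)
for line in summaries:
    print("-", line)
```

Without the summary option, the reasoning item is still present in the output but its summary list is empty, so the function simply returns an empty list.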

Embracing the Future with the OpenAI Responses API

The OpenAI Responses API represents a significant evolution in how developers can interact with AI models. We’ve explored its flexible input/output capabilities, built-in tools like web search and code interpretation, integration with external systems via MCP, and the powerful reasoning models that can tackle complex problems. This API combines the best features of its predecessors while adding new capabilities that make building sophisticated AI applications more accessible. By understanding these features, you’re better equipped to create more dynamic, context-aware applications that can handle a wider range of tasks. Thank you for taking the time to learn about this powerful new tool in the OpenAI ecosystem.

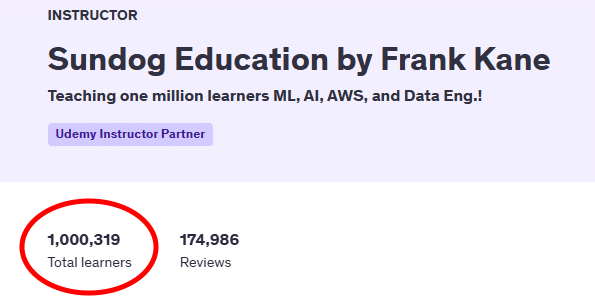

If you found this overview of the OpenAI Responses API valuable, there’s much more to discover in the complete Machine Learning, Data Science, and AI Engineering with Python course. The full course dives deeper into practical implementations, provides hands-on exercises with real-world applications, and covers additional topics like fine-tuning GPT models, advanced RAG techniques, and building LLM agents. With 20 hours of comprehensive content, you’ll gain the skills needed to implement these technologies in your own projects and stay at the forefront of AI development.

About the author:

Frank Kane brings his 9 years of experience as a developer at Amazon and IMDb to this course, offering insights that only come from working at the cutting edge of technology. With 26 issued patents and extensive experience building recommendation systems and machine learning solutions at scale, Frank has a proven track record of translating complex technical concepts into accessible learning materials. His practical, hands-on approach has helped over one million students worldwide develop valuable skills in machine learning, data engineering, and AI development, making him a trusted guide for your journey into advanced AI technologies.